With 2014 now upon us I wanted to take some time to reflect on the past year. It was an incredible and chaotic year but it was also a lot of fun! Here are some of the things that I was involved in this past year.

MVP Summits

This year there were two MVP Summits, one in February and another at the end of November. MVP Summits are great opportunities on a few different levels. First off, you get to hear about what is in the pipeline from the product groups, but you also get to network with your industry peers. I find these conversations incredibly valuable, and the friendships that develop are pretty special. Over time I have built a worldwide network of so many quality individuals that it is actually mind-blowing.

(Pictures from February MVP Summit)

At the attendee party at CenturyLink Field

Dinner with Product Group and other MVPs

(Pictures from November Summit)

At Lowell’s in the Pike Place Market in Seattle for our annual Integration breakfast prior to the Seahawks game.

A portion of the Berlin Wall with Steef-Jan at Microsoft Campus

Dinner at our favourite Indian restaurant in Bellevue called Moksha.

At Steef-Jan’s favourite donut shop in Seattle prior to the BizTalk Summit.

Speaking

This year I had a lot of good opportunities to speak and share some of the things that I have learned. My first stop was in Phoenix at the Phoenix Connected Systems Group in early May.

The next stop was in Charlotte, North Carolina where I presented two sessions at the BizTalk Bootcamp event. This conference was held at the Microsoft Campus in Charlotte. Special thanks to Mandi Ohlinger for putting it together and getting me involved.

Soon after the Charlotte event I headed to New York City, where I had the opportunity to present at the Microsoft Technology Center (MTC) alongside the Product Group and some MVPs to some of Microsoft’s most influential customers in the city.

The next stop on the “circuit” was Norway, to participate in the Bouvet BizTalk Innovation Days conference. This was my favourite event for a few reasons:

- I do have some Norwegian heritage so it was a tremendous opportunity to learn about my ancestors.

- It was another opportunity to hang with my MVP buddies from Europe.

- I don’t think there is a more passionate place on the planet about integration than Scandinavia (Sweden included). Every time I have spoken there I have been completely overwhelmed by the interest in integration in that part of the world.

Special thanks to Tord Glad Nordahl for including me in this event.

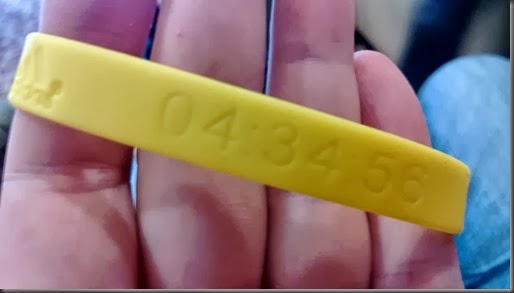

After the Norway event I had the opportunity to participate in the 40th annual Berlin Marathon with my good friend Steef-Jan Wiggers. This was the second marathon I have run, and it was a tremendous cultural experience to run in that city. I also shaved 4 minutes off my previous time from the Chicago Marathon, so it was a win-win type of experience.

The last speaking engagement was in Calgary in November. I had the opportunity to speak about Windows Azure Mobile Services, Windows Azure BizTalk Services and SAP integration at the Microsoft Alberta Architect forum. It was a great opportunity to demonstrate some of these capabilities in Windows Azure to the Calgary community.

Grad School

2013 also saw me returning to school! I completed my undergrad degree around 12 years ago and felt I was ready for some higher education. I have had many good opportunities for growth in my career, but always felt that it was my technical capabilities that created those leadership and management opportunities. At times I felt like I didn’t have a solid foundation when it came to running parts of an IT organization, and that I could benefit from additional education. I don’t ever foresee a time when I am not involved in technology. It is my job but it is also my hobby. With this in mind I set out to find a program that focused on the “Management of Technology”. I didn’t want a really technical Master’s program and I also didn’t want a full-blown business Master’s program. I really wanted a blend of the two. After some investigation I found a program that suited my needs: Arizona State University’s MSIM (Master of Science in Information Management) through the W.P. Carey School of Business.

In August 2013, I headed down to Tempe, Arizona for Student Orientation. During this orientation, 57 other students and I received detailed information about the program. We were also assigned to groups of 4 or 5 people who work closely together over the course of the 16-month program. There are two flavors of the program: you can either attend in person at the ASU campus or participate in the online version. With me living in Calgary, I obviously chose the remote program.

One thing that surprised me was the number of people from all over the United States who are in this program. There are people from Washington State, Washington DC, Oregon, California, Colorado, New Mexico, Texas, Indiana, New York, Georgia, Vermont, Alabama, Utah and of course Arizona. When establishing groups, the school tries to place you with people in the same time zone. My group consists of people from Arizona, which has worked out great so far. The geographic diversity is really a benefit of the program, as everyone brings a unique experience, which has been really insightful.

I just finished up my 3rd course (of 10) and am very pleased with choosing this program. Don’t get me wrong, it is a lot of work, but I am learning a lot and really enjoying the content of the courses. The 3 courses that I have taken so far are The Strategic Value of IT, Business Process Design, and Data and Information Management. My upcoming course is on Managing Enterprise Systems, which I am sure will be very interesting.

If you have any questions about the program feel free to leave your email address in the comments as I am happy to answer any questions that you have.

Books

Unfortunately this list is going to be quite sparse compared to the list that Richard has compiled here, but I did want to point out a few books that I had the opportunity to read this past year.

Microsoft BizTalk ESB Toolkit 2.1

2013 was a slow year for new BizTalk books, in part due to the spike in books released in 2012 and also the nature of the BizTalk release cycle. However, we did see the Microsoft BizTalk ESB Toolkit 2.1 book released by Andres Del Rio Benito and Howard Edidin.

This book comes in Packt Publishing’s new shorter format. Part of the challenge with writing books is that it takes a really long time to get the product out. In recent years Packt has tried to shorten this release cycle, and this book falls into this new category. The book is approximately 130 pages long and is the most comprehensive guide to the ESB Toolkit available. I have not seen another resource with as much detailed information about the toolkit.

Within this book you can expect to find 6 chapters that discuss:

- ESB Toolkit Architecture

- Itinerary Services

- ESB Exception Handling

- ESB Toolkit Web Services

- ESB Management Portal

- ESB Toolkit Version 2.2 (BizTalk 2013) sneak peek.

If you are doing some work with the ESB Toolkit and are looking for a good resource, then this is a good place to start. (Amazon)

The Phoenix Project: A Novel about IT, DevOps and Helping your Business Win

I was made aware of this book via a Scott Gu tweet and boy it was worth picking up. This book reads like a novel but there are a lot of very valuable lessons embedded within the book. This book was so relevant to me that I could have sworn that I have worked with this author before because I had experienced so much of what was in this book. If you are new to a leadership role or are struggling in that role this book will be very beneficial to you. (Amazon)

Adventures of an IT Leader

This is a book that I read as part of my ASU Strategic Value of IT course. It is similar in nature to The Phoenix Project and also reads like a novel. In this case a business leader has transitioned into a CIO position. The book takes you through his trials and tribulations and really raises the question: is “IT Management just about Management”? (Amazon)

The Opinionated Software Developer: What Twenty-Five Years of Slinging Code Has Taught Me

This was an interesting read as it describes Shawn Wildermuth’s experiences as a Software Developer. It was a quick read but was really interesting to learn about Shawn’s experiences throughout his career. I love learning about what other people have experienced in their careers and this provided excellent insight into Shawn’s. (Amazon)

Hard Facts, Dangerous Half-Truths, and Total Nonsense: Profiting from Evidence-based Management

Another book from my ASU studies, and an interesting one. It does read more like a textbook, but the authors are very well recognized for their work in the Business Re-engineering space. I think the biggest thing that I got out of this book was to not lose sight of evidence-based management. All too often technical folks use their previous experiences to dictate future decisions. For example, a particular method worked at a previous company or client. However, taking this approach to a new company or client provides no guarantee that it will work again. This book was a good reminder that a person needs to stick to the facts when making decisions and not rely (too much) on what has worked (or hasn’t) in the past. (Amazon)

2014

Looking ahead I expect 2014 to be as chaotic and exciting as 2013. It has already gotten off to a good start with Microsoft awarding me with my seventh consecutive MVP award in the Integration discipline. I want to thank all of the people working in the Product Group, the Support Group and in the Community teams for their support. I also want to thank my MVP buddies who are an amazing bunch of people that I really enjoy learning from.

Also, look for a refresh of the (MCTS): Microsoft BizTalk Server 2010 (70-595) Certification Guide book. No, the exam has not changed, but the book has been updated to include BizTalk 2013 content that is related to the Microsoft BizTalk 2013 Partner competency exam. I must stress that this book is a refresh, so do not expect 100% (or anywhere near that) new content.